Goal: build a mini research dashboard that trains a model and visualises the results. The dashboard:

- Loads a real dataset

- Trains a simple classifier

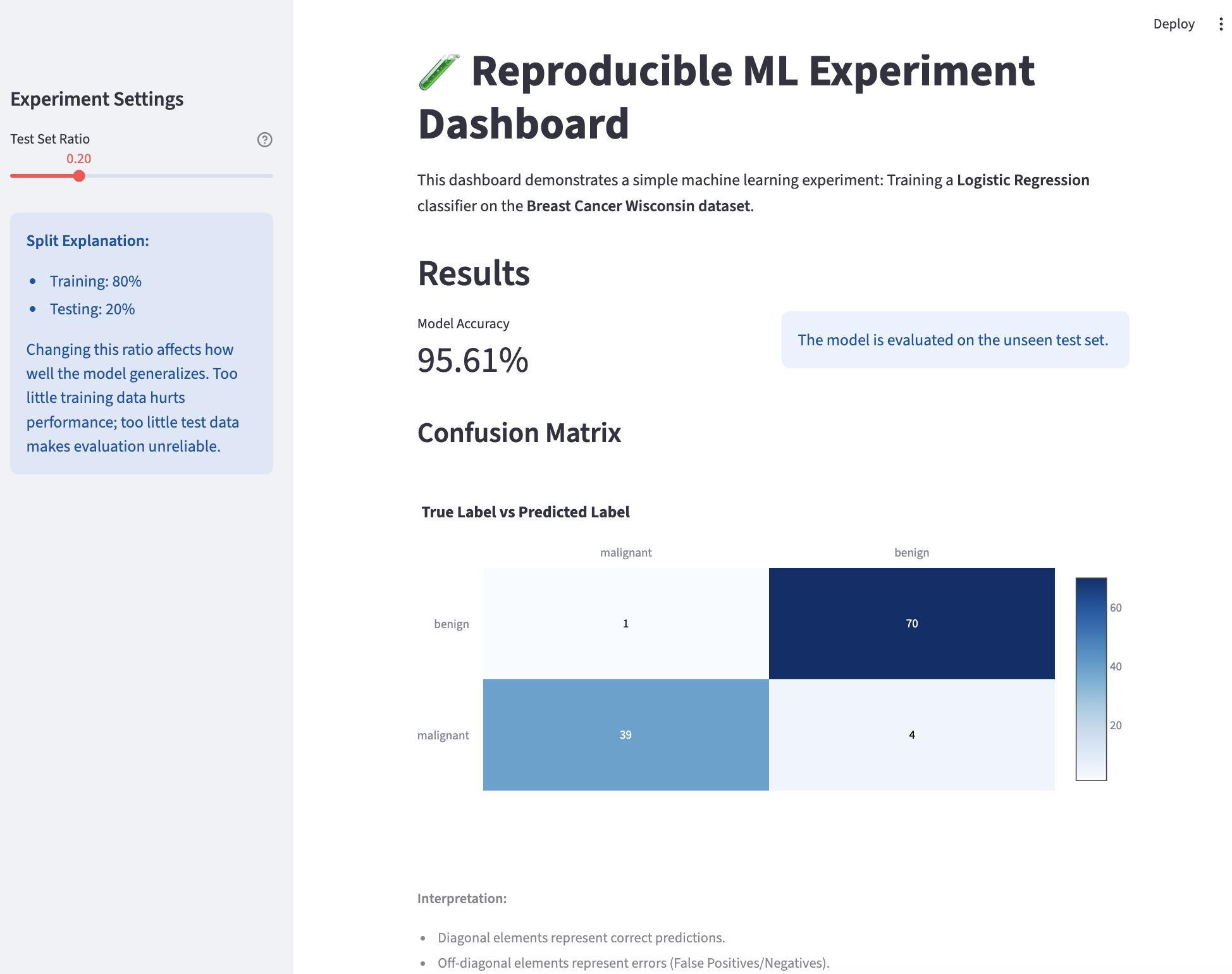

- Displays accuracy and confusion matrix

- Explains results in plain language

Step 1: Prompt the Agent

prompt

Build a small reproducible machine learning experiment dashboard.

Requirements:

- Use a dataset from scikit-learn (Breast Cancer or Digits dataset)

- Train a simple classifier (Logistic Regression)

- Split data into train and test sets

- Display:

  1. Accuracy score

  2. Confusion matrix

  3. Short explanation of the results

- Build the app using Streamlit

- The code must run end to end

Before coding:

- Explain the experiment design

- Justify the dataset and model choice

Then provide the full code.

Step 2: Agent Solution

The agent responds with its experiment design and a complete, runnable solution. Save the generated code as app.py.
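The agent's actual output is not reproduced here, and it will differ from run to run. As a rough illustration of what such an app.py might contain, the sketch below assumes the Breast Cancer dataset, a 75/25 stratified split, and a fixed seed of 42. The helper names `run_experiment`, `explain`, and `main` are hypothetical, and the feature scaling step is an addition to help Logistic Regression converge, not something the prompt asks for.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, confusion_matrix
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def run_experiment(test_size=0.25, seed=42):
    """Train Logistic Regression on Breast Cancer and return test metrics."""
    data = load_breast_cancer()
    # A fixed random_state makes the split, and so the result, reproducible.
    X_train, X_test, y_train, y_test = train_test_split(
        data.data, data.target,
        test_size=test_size, random_state=seed, stratify=data.target,
    )
    # Assumption: scale features first so the solver converges cleanly.
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    return {
        "accuracy": float(accuracy_score(y_test, y_pred)),
        "confusion": confusion_matrix(y_test, y_pred),  # rows: true, cols: predicted
        "labels": [str(name) for name in data.target_names],
    }


def explain(accuracy, confusion, labels):
    """Describe a two-class result in plain language."""
    total = int(confusion.sum())
    correct = int(np.trace(confusion))  # diagonal cells are correct predictions
    return (
        f"The model classified {correct} of {total} test samples correctly "
        f"({accuracy:.1%}). It labelled {int(confusion[0, 1])} {labels[0]} "
        f"cases as {labels[1]} and {int(confusion[1, 0])} {labels[1]} "
        f"cases as {labels[0]}."
    )


def main():
    # Imported here so the experiment functions work without Streamlit.
    import pandas as pd
    import streamlit as st

    st.title("Breast Cancer classification experiment")
    result = run_experiment()
    st.metric("Accuracy", f"{result['accuracy']:.3f}")
    st.subheader("Confusion matrix")
    st.dataframe(pd.DataFrame(
        result["confusion"],
        index=[f"true {name}" for name in result["labels"]],
        columns=[f"predicted {name}" for name in result["labels"]],
    ))
    st.write(explain(result["accuracy"], result["confusion"], result["labels"]))


if __name__ == "__main__":
    main()
```

Keeping the experiment in plain functions, separate from the Streamlit calls, means the training logic can be checked on its own before the dashboard is launched.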

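Step 3: Run and Verify

terminal

streamlit run app.py

The app should open in your browser and show the accuracy score, the confusion matrix, and the short explanation.

Agent generated app

Step 4: Try it Yourself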

prompt

Extend the dashboard:

- Add a button to select the model type (Logistic Regression, Decision Tree, or Random Forest)

- Add more visualizations to compare the performance of different models

- Add a short explanation of why different models have different performance
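For orientation, here is one shape the extended dashboard could take. This is a sketch under assumptions, not the agent's answer: the Breast Cancer dataset, the same 75/25 split, default hyperparameters, and the hypothetical helper names `build_models` and `compare_models`. It uses a radio selector rather than a literal button, since that is the usual Streamlit widget for choosing one option.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier


def build_models(seed=42):
    """Return the three candidate models, keyed by display name."""
    return {
        "Logistic Regression": make_pipeline(
            StandardScaler(), LogisticRegression(max_iter=1000)
        ),
        "Decision Tree": DecisionTreeClassifier(random_state=seed),
        "Random Forest": RandomForestClassifier(
            n_estimators=100, random_state=seed
        ),
    }


def compare_models(test_size=0.25, seed=42):
    """Fit every model on the same split and return its test accuracy."""
    X, y = load_breast_cancer(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=test_size, random_state=seed, stratify=y
    )
    scores = {}
    for name, model in build_models(seed).items():
        model.fit(X_train, y_train)
        scores[name] = float(accuracy_score(y_test, model.predict(X_test)))
    return scores


def main():
    # Imported here so the comparison works without Streamlit installed.
    import pandas as pd
    import streamlit as st

    st.title("Model comparison")
    scores = compare_models()
    choice = st.radio("Model type", list(scores))
    st.metric(f"{choice} accuracy", f"{scores[choice]:.3f}")
    # One bar per model, so the three accuracies can be compared at a glance.
    st.bar_chart(pd.Series(scores, name="accuracy").to_frame())


if __name__ == "__main__":
    main()
```

Evaluating all three models on an identical split keeps the comparison fair: any difference in accuracy then comes from the model, not from which samples landed in the test set.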